The bottleneck in getting the current time in Erlang, and how to solve it

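High-performance network servers usually involve many time-related scenarios and operations, such as timers, reading the time at which an event occurred, and logging.

Erlang provides two ways to get the current time: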

1. erlang:now()

2. os:timestamp()

Once we have the timestamp, we usually pass it to calendar:now_to_universal_time to get a human-readable form such as {{2013,11,4},{8,46,20}}.
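As a minimal sketch of the conversion just described, the module below turns a timestamp tuple into calendar time. The module name `time_demo` and its function names are made up for illustration; only `os:timestamp/0`, `calendar:now_to_universal_time/1` and `io_lib:format/2` come from the standard library.

```erlang
-module(time_demo).
-export([utc_now/0, to_utc/1, format_utc/1]).

%% Current UTC calendar time, read from the OS wall clock.
utc_now() ->
    to_utc(os:timestamp()).

%% Convert a {MegaSecs, Secs, MicroSecs} tuple to {{Y,M,D},{H,Mi,S}}.
to_utc({_Mega, _Secs, _Micro} = Timestamp) ->
    calendar:now_to_universal_time(Timestamp).

%% Render calendar time as "YYYY-MM-DD HH:MM:SS" (an illustrative format).
format_utc({{Y, Mo, D}, {H, Mi, S}}) ->
    lists:flatten(
      io_lib:format("~4..0B-~2..0B-~2..0B ~2..0B:~2..0B:~2..0B",
                    [Y, Mo, D, H, Mi, S])).
```

For example, `time_demo:to_utc({1383, 554780, 0})` returns `{{2013,11,4},{8,46,20}}`, the value quoted above, and `time_demo:format_utc/1` renders it as `"2013-11-04 08:46:20"`.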

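Because time calls are made so frequently, and usually on the critical path, their efficiency and performance are well worth digging into.

Let's first look at the documentation for these two functions:

erlang:now, as described in its documentation: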

now() -> Timestamp

Types:

Timestamp = timestamp()

timestamp() = {MegaSecs :: integer() >= 0, Secs :: integer() >= 0, MicroSecs :: integer() >= 0}

Returns the tuple {MegaSecs, Secs, MicroSecs} which is the elapsed time since 00:00 GMT, January 1, 1970 (zero hour) on the assumption that the underlying OS supports this. Otherwise, some other point in time is chosen. It is also guaranteed that subsequent calls to this BIF returns continuously increasing values. Hence, the return value from now() can be used to generate unique time-stamps, and if it is called in a tight loop on a fast machine the time of the node can become skewed. It can only be used to check the local time of day if the time-zone info of the underlying operating system is properly configured.

If you do not need the return value to be unique and monotonically increasing, use os:timestamp/0 instead to avoid some overhead.

os:timestamp, as described in its documentation:

timestamp() -> Timestamp

Types:

Timestamp = erlang:timestamp()

Timestamp = {MegaSecs, Secs, MicroSecs}

Returns a tuple in the same format as erlang:now/0. The difference is that this function returns what the operating system thinks (a.k.a. the wall clock time) without any attempts at time correction. The result of two different calls to this function is not guaranteed to be different. The most obvious use for this function is logging. The tuple can be used together with the function calendar:now_to_universal_time/1 or calendar:now_to_local_time/1 to get calendar time. Using the calendar time together with the MicroSecs part of the return tuple from this function allows you to log timestamps in high resolution and consistent with the time in the rest of the operating system.
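The practical difference between the two excerpts is the uniqueness guarantee. The sketch below collects a batch of timestamps and checks whether each one is strictly greater than the previous one. The module name `now_vs_timestamp` and its helpers are hypothetical. Since `erlang:now/0` is flagged as deprecated on newer OTP releases, the compile option silences that warning.

```erlang
-module(now_vs_timestamp).
-compile(nowarn_deprecated_function).
-export([samples/2, strictly_increasing/1]).

%% Call the zero-arity Fun N times in a row and collect the results in order.
samples(Fun, N) when is_function(Fun, 0), N >= 0 ->
    [Fun() || _ <- lists:seq(1, N)].

%% True when every element is strictly greater than its predecessor.
%% Timestamp tuples compare element by element, so plain `<` is enough.
strictly_increasing([A, B | Rest]) ->
    A < B andalso strictly_increasing([B | Rest]);
strictly_increasing(_) ->
    true.
```

`now_vs_timestamp:strictly_increasing(now_vs_timestamp:samples(fun erlang:now/0, 1000))` is `true` by the documented guarantee. The same check with `fun os:timestamp/0` may return `false`, because two reads of the wall clock are allowed to be identical.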

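But things are not that simple!

Erlang supports a time correction mechanism: put simply, when the system time changes abruptly, Erlang can still maintain sane time semantics. For the implementation details, see this article: 服务器时间校正思考 (Thoughts on Server Time Correction).

Time correction complicates matters. So how can it be disabled?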

+c

Disable compensation for sudden changes of system time. Normally, erlang:now/0 will not immediately reflect sudden changes in the system time, in order to keep timers (including receive-after) working. Instead, the time maintained by erlang:now/0 is slowly adjusted towards the new system time. (Slowly means in one percent adjustments; if the time is off by one minute, the time will be adjusted in 100 minutes.)

When the +c option is given, this slow adjustment will not take place. Instead erlang:now/0 will always reflect the current system time. Note that timers are based on erlang:now/0. If the system time jumps, timers then time out at the wrong time.
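To check from inside a running node whether this compensation is active, one option is `erlang:system_info(tolerant_timeofday)`, which reports `enabled` or `disabled`. The wrapper module `time_correction_check` below is hypothetical, and the exact relationship between `+c` and this value depends on the ERTS release, so treat it as a sketch rather than a definitive check.

```erlang
-module(time_correction_check).
-export([status/0, is_enabled/0]).

%% Ask the runtime whether compensation for sudden system time
%% changes is active. The result is the atom enabled or disabled.
status() ->
    erlang:system_info(tolerant_timeofday).

%% Convenience wrapper that returns a boolean.
is_enabled() ->
    status() =:= enabled.
```

On a node started with the `+c` option described above, `time_correction_check:status()` would be expected to return `disabled`.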

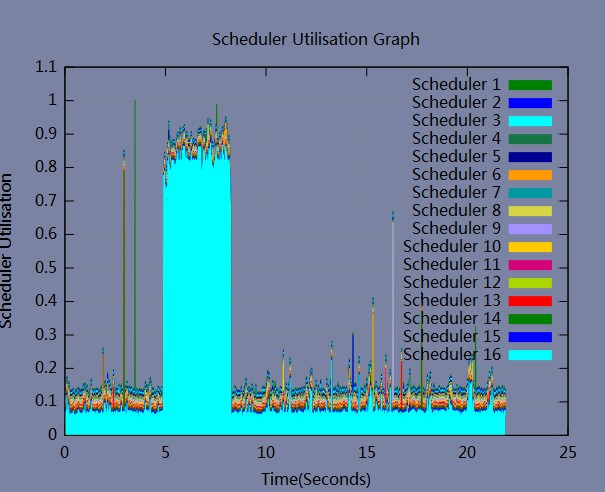

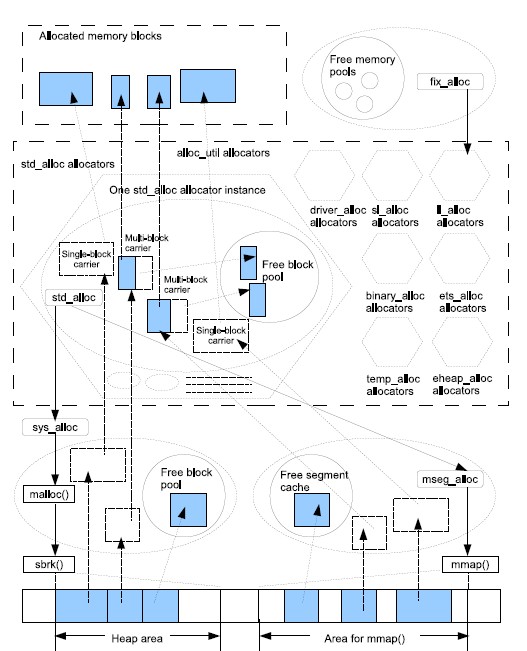

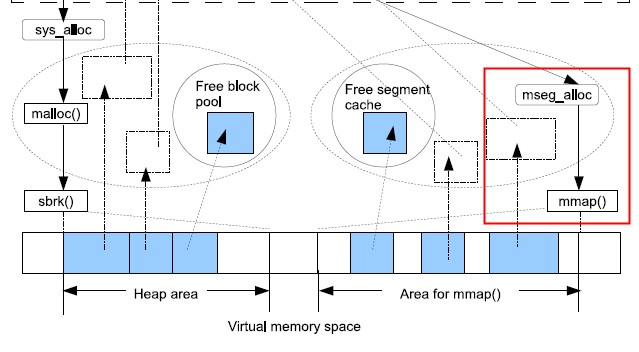

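Precisely because of the time correction mechanism, the server has to adjust its time every so often, while many threads may be reading the time at the same moment. To keep the time consistent, a lock is needed to protect it.

Let's look at the relevant code: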
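One way to observe the cost of that lock is a small concurrent micro-benchmark: many processes call the same time function at once while the total elapsed time is measured. The module `time_bench` and its parameters are assumptions made for illustration. The numbers depend heavily on the OTP release, scheduler count and hardware, so no results are claimed here.

```erlang
-module(time_bench).
-compile(nowarn_deprecated_function).
-export([run/3]).

%% Spawn Procs processes that each call Fun Calls times, wait for all
%% of them to finish, and return the elapsed wall time in microseconds.
run(Fun, Procs, Calls) when is_function(Fun, 0) ->
    Parent = self(),
    {Micros, ok} =
        timer:tc(fun() ->
            Pids = [spawn_link(fun() ->
                                   loop(Fun, Calls),
                                   Parent ! {done, self()}
                               end)
                    || _ <- lists:seq(1, Procs)],
            %% Collect one completion message per worker.
            lists:foreach(fun(Pid) ->
                              receive {done, Pid} -> ok end
                          end, Pids),
            ok
        end),
    Micros.

%% Call Fun N times and discard the results.
loop(_Fun, 0) -> ok;
loop(Fun, N) -> Fun(), loop(Fun, N - 1).
```

Comparing `time_bench:run(fun erlang:now/0, 64, 100000)` with `time_bench:run(fun os:timestamp/0, 64, 100000)` on a multi-core machine gives a rough picture of how much the two calls contend under load.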
